Ethical Google Review Management for Local Businesses

Most local businesses do not have a review problem. They have a workflow problem.

The pattern is familiar. A happy customer says they will leave feedback and never does. A frustrated customer posts publicly before anyone on the team even sees the issue. Weeks pass with no new reviews, then three arrive at once because someone remembered to send a batch request. Replies are inconsistent because the owner answers some, a receptionist answers others, and the agency steps in only when something goes wrong.

That is not Google review management. That is improvisation.

The businesses that make reviews work for rankings, trust, and conversions do not treat them as a side task. They run a system. The system covers when to ask, how to ask, who replies, how quickly replies go out, what gets escalated, and how review trends feed back into operations. When those parts connect, reviews stop being random proof and start becoming an asset you can manage.

Why Your Business Needs a Review Generation System, Not a Checklist

Monday starts with a new one-star review from Friday. No one saw it over the weekend. By lunchtime, a manager asks the front desk to send review requests to last month's customers. Three five-star reviews come in, two recent customers hear nothing, and the owner replies to the complaint without context.

That is a process problem, not a review problem.

A checklist cannot carry the workload. Claiming your profile, asking for reviews, replying when convenient, and checking feedback once in a while may cover the basics, but it does not create consistency. Google review management works when the business decides what happens after every completed visit, job, booking, or purchase, then runs that sequence the same way every time.

The difference shows up in three places fast. Review flow becomes steadier. Response times stop depending on who happens to be working. Operational issues surface earlier because feedback gets reviewed as a signal, not treated as isolated comments.

What a system does that a checklist cannot

A review generation system connects four jobs that are often handled separately:

Request timing

The business asks at a defined moment, tied to a real service milestone, not whenever someone remembers.

Ownership

Staff know who sends requests, who replies, who approves sensitive responses, and who handles escalation.

Service recovery

Negative feedback triggers follow-up, not just a public reply. The team works the issue offline and records the outcome.

Reporting

Review trends are tracked over time so recurring complaints, high-performing locations, and response gaps are visible.

Many businesses fall short here. They treat review generation as the whole strategy, then leave the rest to chance. More volume without routing, response standards, and review analysis only creates a larger pile of unmanaged feedback.

Reactive review management usually creates three avoidable costs.

First, review velocity becomes inconsistent, which makes performance harder to predict and harder to improve.

Second, the public voice drifts. A manager sounds professional, the owner sounds irritated, and an agency template sounds generic. Customers notice that mismatch.

Third, useful feedback never reaches operations. If customers keep mentioning late arrivals, unclear handoffs, or poor front-desk communication, the issue is no longer just reputation. It is a service pattern that needs fixing.

The practical shift is simple: stop treating reviews as a marketing task that starts and ends with the ask. Build an operating system that covers solicitation, response, escalation, and measurement as one workflow.

That is how reviews become a growth channel instead of a maintenance chore.

If you need a starting point for the acquisition side, our guide on how to get more Google reviews is a useful companion to the system outlined here.

Laying the Foundation for Five-Star Feedback

Before asking for a single review, fix the environment around the review.

Businesses often rush straight to requests and reminders. That creates friction. If your profile is incomplete, your photos are dated, your categories are wrong, or your replies sound like they come from three different companies, more review volume will only magnify the inconsistency.

Get your Google Business Profile ready for scrutiny

A customer leaving a review is not only rating the service they received. They are validating what they see on your profile.

Check these basics first:

Business information accuracy

Your name, address, phone number, opening hours, and primary category need to match reality.

Service clarity

Service-based businesses should make sure services are listed clearly. Retail and hospitality teams should review descriptions, attributes, and booking or ordering paths.

Photo quality

Outdated or low-quality photos weaken trust. Fresh location photos, team photos, and service imagery support the credibility of incoming reviews.

Ownership and access

Make sure the right people have profile access. Too many businesses discover no one can reply quickly because logins sit with a former employee or old agency.

Decide how your business sounds in public

Reply quality is where many review programmes become messy.

The owner writes one way. The branch manager writes another. A tool generates something generic. The result is a public thread that feels disjointed.

Your response voice should be documented before volume increases. It does not need to be complicated, but it does need rules.

A simple framework works well:

| Voice element | Questions to answer |
|---|---|
| Tone | Formal, warm, concise, conversational, or premium |
| Language boundaries | What words should you avoid, especially in complaints |
| Sign-off style | Named person, team name, branch name, or business name |
| Escalation phrases | How you invite the customer to continue privately |
| Local cues | Whether you mention the branch, area, or service type |

For example, a dental practice may choose calm, professional language with privacy-conscious wording. A restaurant may use a warmer, more conversational tone. A legal firm usually needs tighter, more formal responses.

Assign ownership before the first problem lands

The strongest setup is boring. That is usually a good sign.

Decide:

- Who receives alerts

- Who drafts replies

- Who approves sensitive responses

- Who escalates complaints to operations

- Who reviews trends each month

Without that structure, reviews drift between inboxes and no one owns the outcome.

Practical tip: If multiple people reply, create a short internal response playbook with approved greetings, apology language, escalation instructions, and examples of strong past replies.

Build consistency before you automate anything

Automation works best after standards exist.

If you automate a weak process, you produce inconsistent replies faster. Start with three assets:

- A response voice guide

- A review triage rule set

- A notification and escalation map

Then test the process manually for a short period. Once the team can handle reviews consistently, software becomes a force multiplier instead of a patch.

Businesses that want a central workflow for monitoring, assigning, and replying usually benefit from using a dedicated process rather than relying only on the native profile interface. This overview of managing online reviews is useful if you are formalising ownership across a team.

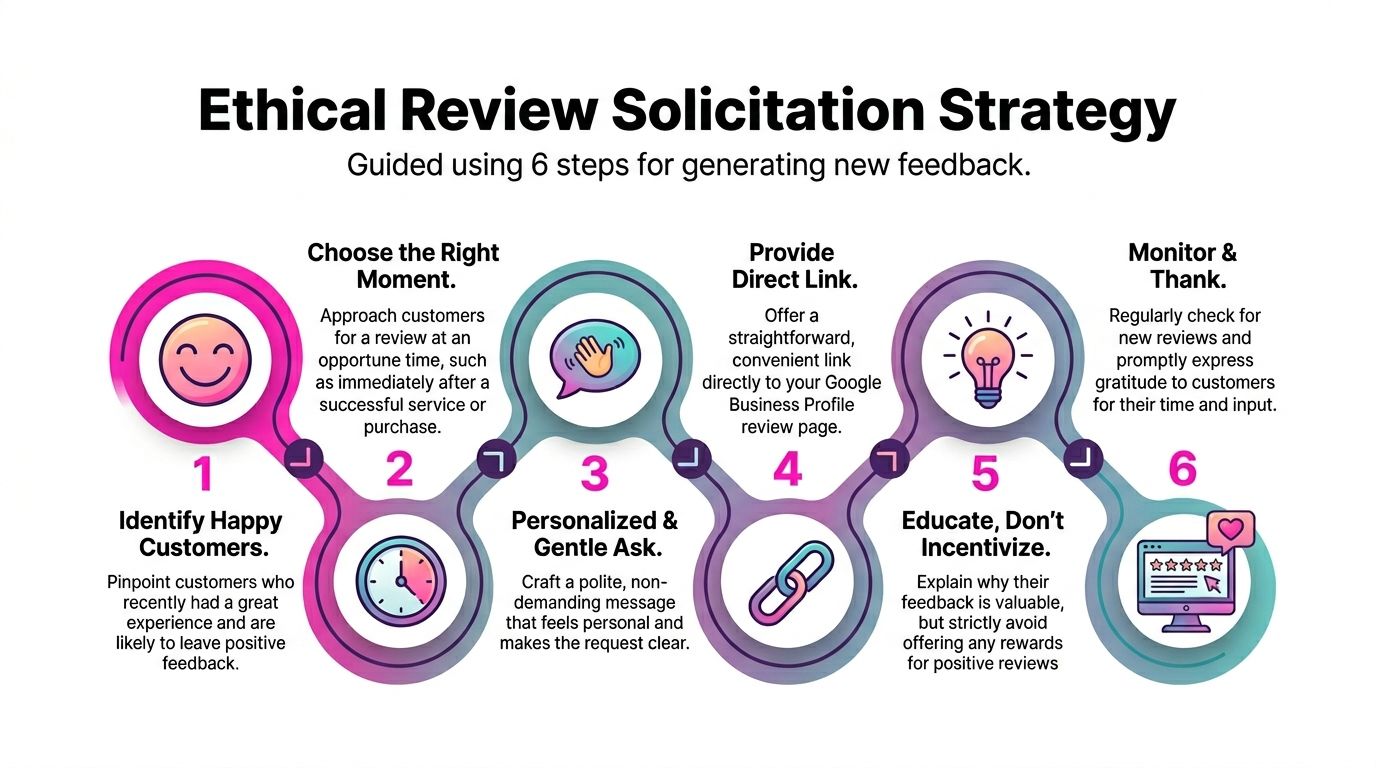

The Art and Science of Ethical Review Solicitation

Most businesses ask for reviews in one of two ways. They either ask almost nobody, or they ask everyone badly.

Ethical solicitation sits between those extremes. It is proactive, consistent, and easy for the customer. It does not pressure people, filter out unhappy customers, or dangle incentives that create compliance problems.

The objective is not just more reviews. It is a steady flow of genuine reviews from real customers at the right moments.

Ask after the right moment, not at the end of the month

Timing changes everything.

A restaurant should ask soon after a good dining experience. A home services company should ask after the job is complete and the customer has seen the result. A clinic should ask once the visit is finished and any immediate friction has been resolved. An estate or legal service may need a later trigger tied to a completed milestone.

The common mistake is batching requests. That looks efficient internally, but it often creates unnatural review patterns.

One cited UK-focused methodology recommends a slow-drip review solicitation campaign via targeted SMS or email, warning that sudden spikes can reduce visibility by up to 30%. The same source recommends embracing the 4.2 to 4.5 star authenticity range, noting that a perfect 5.0 can appear fake to 68% of UK consumers. Those findings are summarised in ReviewSense’s investigation into Google review management software.

That aligns with what practitioners see in the field. Natural cadence tends to outperform campaigns that create bursts and then disappear.
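A slow-drip cadence is easy to sketch in code. The example below is a minimal illustration, not a recommended send rate: the function name `drip_schedule`, the `per_day` cap, and the sample customers are all assumptions made for the example. It simply spreads a backlog of requests across consecutive days instead of sending them in one batch.

```python
from datetime import date, timedelta


def drip_schedule(customers, start, per_day):
    """Spread review requests over consecutive days, at most per_day each day.

    per_day is a hypothetical cap chosen by the business, not a Google rule.
    """
    if per_day < 1:
        raise ValueError("per_day must be at least 1")
    schedule = {}
    for index, customer in enumerate(customers):
        # Every block of per_day customers moves to the next calendar day.
        send_on = start + timedelta(days=index // per_day)
        schedule.setdefault(send_on, []).append(customer)
    return schedule


# Illustrative data only.
plan = drip_schedule(["Ana", "Ben", "Cara", "Dev", "Eli"], date(2024, 3, 4), per_day=2)
for day, names in plan.items():
    print(day.isoformat(), names)
```

With five customers and a cap of two per day, the requests land across three days rather than arriving at once.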

Match the request channel to customer behaviour

Different businesses need different request paths.

Consider the trade-offs:

SMS

Fast, direct, and effective for service businesses after a completed job. Keep it short and include the direct review link.

Email

Better when the service is more considered or when you need slightly more context. Useful for healthcare, professional services, and retail follow-ups.

In-person QR codes

Best when staff can prompt at the right moment without pressure. Strong for hospitality, salons, clinics, and reception-led environments.

Printed handouts or receipts

Useful as a support tactic, not the primary one. Too passive on their own.

A practical rule is this. If the customer is already using their phone during or just after the interaction, SMS and QR often work well. If the decision cycle is longer or the service is more sensitive, email usually gives more space and feels less abrupt.

If you want another perspective on request wording and outreach ideas, The Cherubini Company has a useful piece on how to get Google reviews.

What ethical asking looks like

Ethical solicitation requires adherence to a few key principles.

Ask all real customers, not only the happy ones

Filtering customers before inviting public feedback creates risk and distorts the signal.

Do not offer rewards for positive reviews

Incentives damage trust and can create policy problems.

Use direct links

Do not make people search for your business if you can avoid it.

Keep the message plain

Customers do not need a speech. They need a clear, polite ask.

Here is a simple example for a service business:

Hi Sarah, thanks again for choosing us today. If you have a minute, we’d value your feedback on Google. It helps other local customers and helps us improve. [review link]

And for hospitality:

Thanks for visiting us this evening. If you’d like to share your experience, you can leave a quick Google review here. We read every one. [review link]

Build a pipeline, not a campaign

The most reliable review systems run from business triggers.

Good triggers include:

- completed invoice

- checked-out appointment

- closed support ticket

- delivered order

- finished installation

- resolved complaint

That matters because it removes guesswork. Staff do not need to remember who to ask. The system identifies a completed customer event and starts the request flow.
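As a rough sketch of that idea, the snippet below turns completed customer events into a list of people to ask. The event names, the `CustomerEvent` class, and the `requests_to_send` function are hypothetical and would need mapping to whatever your booking, invoicing, or ticketing system actually emits. Note that it asks every customer with a qualifying event and applies no satisfaction filter, in line with the ethical principles above.

```python
from dataclasses import dataclass

# Hypothetical event names mirroring the triggers listed above.
REVIEW_TRIGGERS = {
    "invoice_completed",
    "appointment_checked_out",
    "ticket_closed",
    "order_delivered",
    "installation_finished",
    "complaint_resolved",
}


@dataclass(frozen=True)
class CustomerEvent:
    customer_id: str
    event_type: str


def requests_to_send(events, already_asked):
    """Return customer IDs that should get a review request, once each."""
    to_ask = []
    seen = set(already_asked)
    for event in events:
        if event.event_type in REVIEW_TRIGGERS and event.customer_id not in seen:
            to_ask.append(event.customer_id)
            seen.add(event.customer_id)  # avoid asking the same customer twice
    return to_ask


# Illustrative data only.
events = [
    CustomerEvent("c1", "invoice_completed"),
    CustomerEvent("c2", "quote_sent"),        # not a completed milestone
    CustomerEvent("c1", "order_delivered"),   # c1 is already queued
    CustomerEvent("c3", "ticket_closed"),     # c3 was asked previously
]
pending = requests_to_send(events, already_asked={"c3"})
print(pending)
```

The de-duplication step matters in practice: a customer who triggers two events in a week should still receive one request, not two.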

Accept authentic feedback, including the imperfect bits

Many businesses secretly want only glowing reviews. That mindset usually backfires.

A profile with nothing but perfect praise can feel rehearsed. Real customers expect variation. They read detail, recency, and how the business responds when something was not ideal.

Practical rule: Optimise for credibility, not perfection. Credibility converts better.

If your review requests are ethical and steady, the profile becomes stronger over time. You gain fresh feedback, visible engagement, and a truer picture of the customer experience. That is the point of Google review management done properly.

Businesses that want to strengthen the review experience inside Maps should also think about the journey after the click, not only the request itself. These practical notes on Google Maps reviews are relevant when you are tightening that workflow.

A Masterclass in Responding to Every Type of Review

A public reply is not written only for the reviewer. It is written for every future customer reading the thread.

That is why the reply matters even when the original review is unfair, vague, or overly brief. People judge the business by how it behaves in public.

The biggest mistake I see is over-standardisation. Teams create one polite template and use it everywhere. The language is technically fine, but it feels detached. Customers can spot that immediately.

Positive reviews need amplification, not autopilot

A good positive reply should do three things. Thank the person, reflect something specific from their review, and reinforce the service or experience they valued.

Weak reply: “Thanks for your review.”

Stronger reply: “Thanks for taking the time to mention the same-day repair. We’re pleased the team could get the issue sorted quickly, and we appreciate you choosing us.”

That second version feels human. It also reinforces useful context for future readers.

Negative reviews need calm structure

Negative responses often go wrong because the business tries to win the argument publicly.

That rarely helps.

The better structure is simple:

- Acknowledge the experience

- Apologise for the frustration or disappointment

- Clarify only where necessary

- Offer an offline route to resolve it

- Keep the tone measured

If the review contains factual errors, correct them carefully. Do not sound combative. If the issue involves privacy, billing, legal details, or sensitive personal information, keep the public reply short and move the discussion offline.

Tip: A public reply should show accountability. It should not become a case file.

Neutral and mixed reviews are often the easiest wins

Three-star and mixed reviews are under-managed.

That is a mistake because they often come from customers who are open to being won back. They are not attacking the business. They are signalling that something felt average, slow, unclear, or inconsistent.

A strong response thanks them, addresses the issue directly, and shows what the business will improve.

For example, if a reviewer praises the food but mentions slow service, the reply should recognise both parts. Do not ignore the criticism and respond only to the praise.

Google Review Response Templates

| Review Type | Key Objectives | Example Snippet (Customisable) |
|---|---|---|
| Positive | Thank them, mention specifics, reinforce trust | “Thanks for the kind words, [Name]. We’re pleased to hear you were happy with [specific service/product]. We appreciate you taking the time to share your experience.” |
| Negative | Acknowledge, apologise, de-escalate, move offline | “We’re sorry to hear this, [Name]. This is not the experience we want for our customers. Please contact us at [contact method] so we can look into it properly and work towards a resolution.” |
| Neutral or mixed | Appreciate feedback, address issue, show improvement | “Thank you for the feedback, [Name]. We’re glad you liked [positive element], and we appreciate your comments about [issue]. We’re reviewing that internally and value the chance to improve.” |

Three live-response principles that hold up in practice

Use detail from the review

Even one specific reference makes the reply feel real.

Protect the business without sounding defensive

You can clarify facts without arguing with the customer.

Keep templates as scaffolding, not final copy

Teams need consistency, but every reply should still sound situational.

A review response process becomes much easier when your team has examples, approval rules, and a clear escalation path for sensitive posts. If you are tightening that part of your workflow, this guide on how do you respond to a Google review is worth keeping handy.

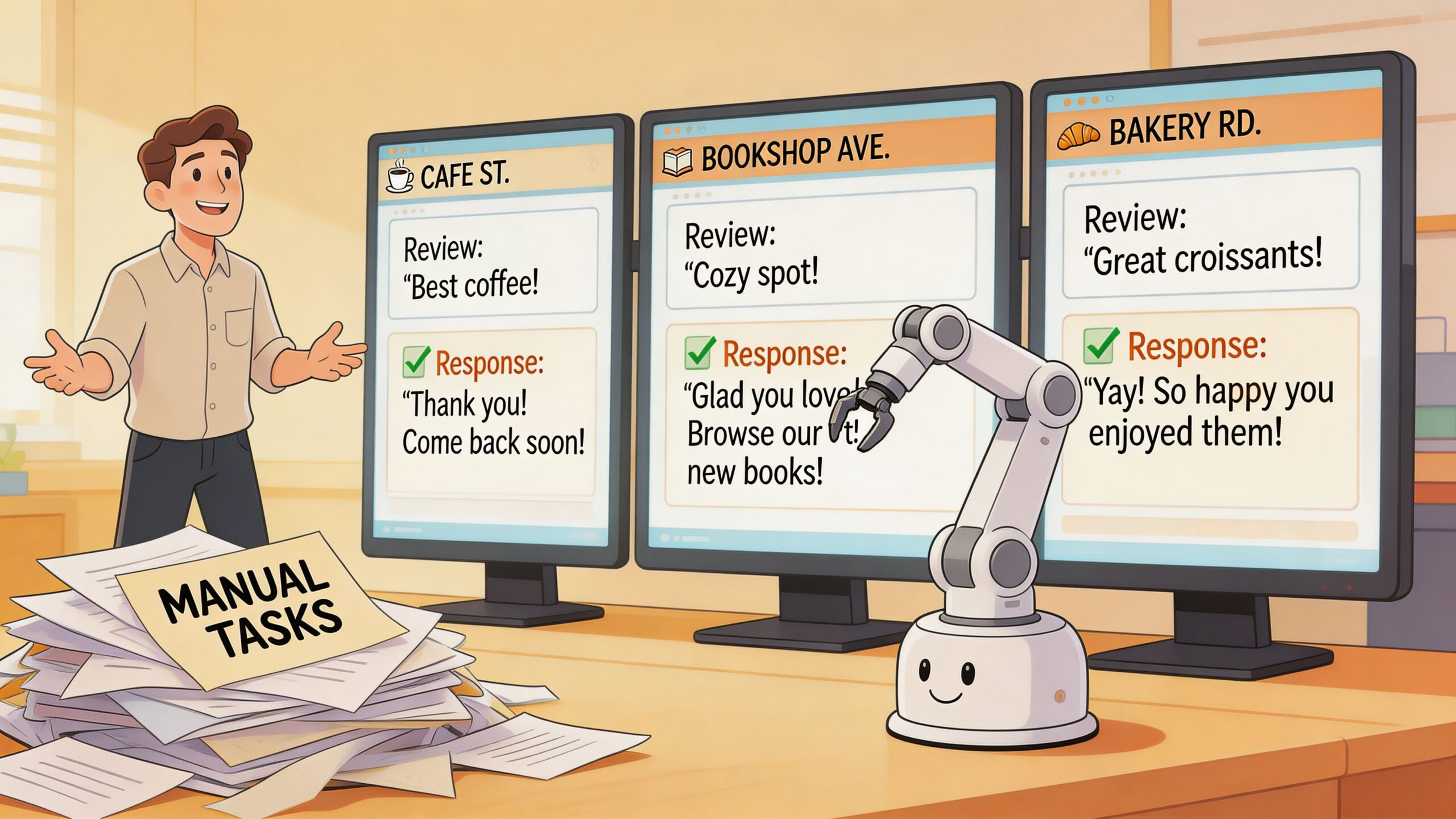

Scaling Your System with Automation and Multi-Location Management

Manual review management works for a while.

Then the business adds a second location. Or a franchise network grows. Or an agency takes on more clients. Or one busy month doubles review volume. At that point, the old method collapses. People miss alerts, responses slow down, and brand voice drifts across locations. Automation earns its place at this juncture.

Automation works best when it handles the repeatable layer

Not every review needs the same level of human attention.

A short five-star review with a simple compliment can often be drafted safely by software, then published automatically or queued for light review depending on the business. A complaint involving billing, service failure, discrimination, legal risk, or healthcare privacy should never be treated the same way.

That split matters.

One UK benchmark summary states that systematic management has helped multi-location businesses achieve 156% boosts in customer acquisition, while agencies reported 338% review growth after using a unified dashboard system. The same source warns that template-only replies are perceived as disrespectful by over 70% of UK reviewers. Those figures appear in Guac Digital’s overview of Google review management concepts.

The practical lesson is clear. Scale requires systems, but pure templating creates a quality problem of its own.

Human-reviewed automation is the model that holds up

The strongest workflow usually looks like this:

- low-risk positive reviews get drafted automatically

- mixed or ambiguous reviews get flagged for approval

- negative or sensitive reviews get routed to a human immediately

- location managers can personalise local details before publishing

- central teams monitor brand consistency and exceptions

This is also the point in the process where platforms start to matter. Tools differ, but the useful features are consistent: central inboxes, sentiment tagging, routing rules, approval workflows, and reporting across locations. For example, LocalHQ includes a review autoresponder that drafts on-brand Google review replies in real time, which is useful when the team needs fast first-pass responses without losing oversight.

Multi-location businesses need central standards and local context

A franchise with ten branches does not need ten completely different review strategies. It needs one operating model with room for local nuance.

That means:

| Central function | Local function |
|---|---|
| Brand voice guidelines | Add branch-specific detail |
| Escalation rules | Resolve operational issues on the ground |
| Reporting standards | Monitor local service patterns |
| Response governance | Personalise approved drafts |

A central dashboard helps because head office can compare locations without forcing every reply into the same rigid format. One branch may get repeated comments about waiting times. Another may be praised for staff friendliness but criticised for parking. Those insights are useful only if you can see them by location.

Key principle: Automation should remove admin, not judgement.

When not to automate a reply

Do not automate if the review includes:

- accusations of misconduct

- medical or legal sensitivity

- staff conflict

- refund disputes

- threats, abuse, or suspected fake activity

- complex factual claims

Those reviews need a person, not a rule.
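One way to make that boundary explicit is a small routing function that decides only who sees a review, never what the reply says. The sketch below is illustrative: the `SENSITIVE_TERMS` list, the 40-word threshold, and the queue names are assumptions, and simple keyword matching will miss cases that a person would catch. When in doubt, it routes to a human.

```python
# Hypothetical keyword fragments; a real list needs review by the business.
SENSITIVE_TERMS = (
    "refund", "legal", "lawyer", "discriminat", "privacy",
    "threat", "fake", "misconduct", "medical",
)

MAX_AUTO_DRAFT_WORDS = 40  # assumed cut-off for a "short" compliment


def route_review(rating, text):
    """Return the queue a review belongs in: auto_draft, needs_approval, or human_now."""
    lowered = text.lower()
    # Sensitive content always goes to a person, whatever the star rating.
    if any(term in lowered for term in SENSITIVE_TERMS):
        return "human_now"
    if rating <= 2:
        return "human_now"
    if rating == 5 and len(text.split()) <= MAX_AUTO_DRAFT_WORDS:
        return "auto_draft"
    # Mixed, long, or ambiguous reviews get a draft only after approval.
    return "needs_approval"


print(route_review(5, "Great service, friendly team!"))
print(route_review(5, "Great visit but I still want my refund"))
print(route_review(3, "Food was good, service was slow"))
```

The order of the checks is the design choice that matters here: the sensitivity test runs before the rating test, so a five-star review that mentions a refund dispute still reaches a human.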

For businesses with multiple profiles, branches, or client accounts, the operational advantage comes from seeing everything in one place and applying the same standards across the portfolio. That is why central profile oversight matters as much as response speed. If that is your use case, this guide to managing multiple Google Business Profiles is directly relevant.

Measuring Performance and Proving the ROI of Your Efforts

A branch manager asks why the team should keep chasing reviews if the rating is already 4.7. That question usually means the business is tracking the wrong thing.

Star rating matters, but it is an outcome metric. It does not show whether the review system is healthy, whether requests are being sent consistently, or whether replies are turning customer feedback into better visibility and more enquiries. To prove ROI, measure the workflow first, then connect it to commercial results.

Start with controllable metrics

The first layer is operational discipline. These are the numbers a team can improve week by week:

Response time

How long it takes to reply after a review is published.

Response coverage

The share of reviews that receive a reply, including neutral and negative feedback.

Review volume

How many new reviews each location earns over a defined period.

Review velocity

Whether reviews arrive steadily or in short bursts after ad hoc pushes.

Sentiment themes

The recurring topics in written feedback, such as wait times, staff attitude, cleanliness, or pricing confusion.

Location comparison

Which branches are improving, flat, or slipping against the same reporting standard.

Which branches are improving, flat, or slipping against the same reporting standard.

Weak programmes show themselves here. A branch with a good average rating can still have poor coverage, slow replies, and recurring complaints that nobody has assigned to operations. A branch with fewer reviews can outperform over time if its request flow is consistent and the manager acts on feedback themes quickly.
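Three of these metrics can be calculated from nothing more than review and reply timestamps. The following is a minimal sketch under that assumption: the `Review` class and the function names are hypothetical, and the sample data is invented for illustration. Velocity is approximated here as a count of reviews per ISO week, which is one reasonable definition among several.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import Optional


@dataclass
class Review:
    posted_at: datetime
    replied_at: Optional[datetime] = None  # None means no reply yet


def response_coverage(reviews):
    """Share of reviews that have received a reply (0.0 to 1.0)."""
    if not reviews:
        return 0.0
    replied = sum(1 for r in reviews if r.replied_at is not None)
    return replied / len(reviews)


def average_response_hours(reviews):
    """Mean hours between a review and its reply, ignoring unanswered reviews."""
    gaps = [
        (r.replied_at - r.posted_at).total_seconds() / 3600
        for r in reviews
        if r.replied_at is not None
    ]
    return sum(gaps) / len(gaps) if gaps else None


def weekly_counts(reviews):
    """Reviews per (ISO year, ISO week); uneven counts suggest bursts."""
    counts = {}
    for r in reviews:
        iso = r.posted_at.isocalendar()
        key = (iso[0], iso[1])
        counts[key] = counts.get(key, 0) + 1
    return counts


# Illustrative data only.
reviews = [
    Review(datetime(2024, 3, 4, 9), datetime(2024, 3, 4, 15)),
    Review(datetime(2024, 3, 6, 10), datetime(2024, 3, 7, 10)),
    Review(datetime(2024, 3, 12, 12)),
    Review(datetime(2024, 3, 13, 8), datetime(2024, 3, 13, 14)),
]
print(response_coverage(reviews))
print(average_response_hours(reviews))
print(weekly_counts(reviews))
```

Running the same calculation per location, with the same definitions, is what makes branch comparison meaningful.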

Tie review activity to business outcomes

Once those inputs are stable, connect them to actions customers take. For local businesses, the useful outcome metrics are usually:

- website visits from the Business Profile

- direction requests

- phone calls

- booking or enquiry volume

- local ranking movement for priority terms and service areas

The benchmark figures cited earlier in this article suggest a relationship between well-managed review activity and customer engagement. The exact numbers matter less than the pattern. More high-quality reviews, collected consistently and answered well, tend to improve trust signals and increase profile actions over time.

That time element matters. Do not judge ROI from one busy week or one slow month. Compare periods before and after the system became consistent. If response coverage rises from 40% to 95%, review velocity becomes steady, and a branch fixes a complaint pattern that appears repeatedly in reviews, the next question is simple: did calls, leads, or bookings improve after that change?

Build reports that support decisions

A useful monthly review report should help someone act, not just admire the numbers.

| KPI | What it tells you |
|---|---|
| Review velocity | Whether the request system is stable |
| Average response time | Whether ownership is clear and the team is following process |
| Sentiment trends | Which service issues need operational follow-up |
| Branch comparison | Where coaching, staffing, or local fixes are needed |
| Local ranking movement | Whether prominence is improving in the target area |

In practice, I look for three things in these reports. First, whether the system is being followed. Second, whether review themes point to a service problem that needs fixing outside marketing. Third, whether improvements in review activity line up with better profile performance and lead volume.

That is the difference between reporting and management.

Account for review loss and data distortion

ROI analysis also needs context. If a location loses legitimate reviews, month-on-month reporting can look worse even when the team is doing the right work. If that happens, document the drop, check profile history, and investigate common causes of disappeared Google reviews. Otherwise, teams end up defending a performance decline that was caused by platform volatility, not by a broken process.

The businesses that get real return from Google review management treat reviews as an operating system. Solicitation drives fresh feedback. Responses protect trust and improve conversion. Measurement shows which locations are following the process, which problems need fixing, and where review activity is producing commercial lift. That is how reviews become a predictable growth engine rather than a reputation task someone remembers when things go wrong.

Frequently Asked Questions About Difficult Reviews

Difficult reviews tempt businesses into the wrong goal. They focus on removal first, strategy second.

That is understandable, but it is often the wrong sequence.

Can I get a fake or spam review removed?

Sometimes, yes. Often, no.

UK businesses face only a 22% success rate for removing flagged spam reviews, compared with 35% globally. The same dataset says AI-assisted evidence-gathering tools can raise removal success to 41%. Those figures are cited alongside Google’s own reporting guidance: Google Business help on reporting reviews.

The practical takeaway is that removal should be pursued selectively and with evidence. It is not a guaranteed clean-up tool.

What if the negative review is real but inaccurate?

Do not flag it because you dislike it.

Reply calmly. Correct the specific point without sounding hostile. Invite the customer to continue the conversation privately. Future readers care less about whether every detail is perfect and more about whether your business behaves reasonably under pressure.

What should I do during a review bomb?

Treat it as an incident, not a normal review day.

Document timing, account patterns, repeated language, and any external trigger. Pause routine assumptions. Route everything through one owner or one central team. If there is evidence of coordinated abuse, prepare that evidence before reporting.

Why do reviews sometimes vanish?

Not every missing review means sabotage. Google moderation, account issues, and profile anomalies can all affect visibility. If you are diagnosing that situation, this explainer on disappeared Google reviews gives a useful overview of common causes and checks.

The bigger principle is this. Not every bad review should be removed, and not every removable review should be your first priority. Often, the better move is to respond well, keep generating genuine feedback, and strengthen the profile with consistent public evidence of good service.

If you want a cleaner way to run this as an operating system, not a patchwork of reminders and spreadsheets, LocalHQ helps teams manage review requests, monitor new feedback, and draft on-brand responses from one dashboard. For businesses that need a practical next step, the Review Manager workflow is the feature to focus on.